Tech News from cloudscale

The GPU servers from cloudscale.

For LLMs, AI, etc.

Author: Markus Furrer - cloudscale

"AI" is on everyone's lips these days. The technology raises hopes for applications in all kinds of areas of life, and you probably already have ideas about what you could improve with intelligent tools. Many of the building blocks are freely available on the internet, and with the new GPU servers from cloudscale you now also have the computing power you need to go full throttle with the right model.

The new GPU flavors at cloudscale

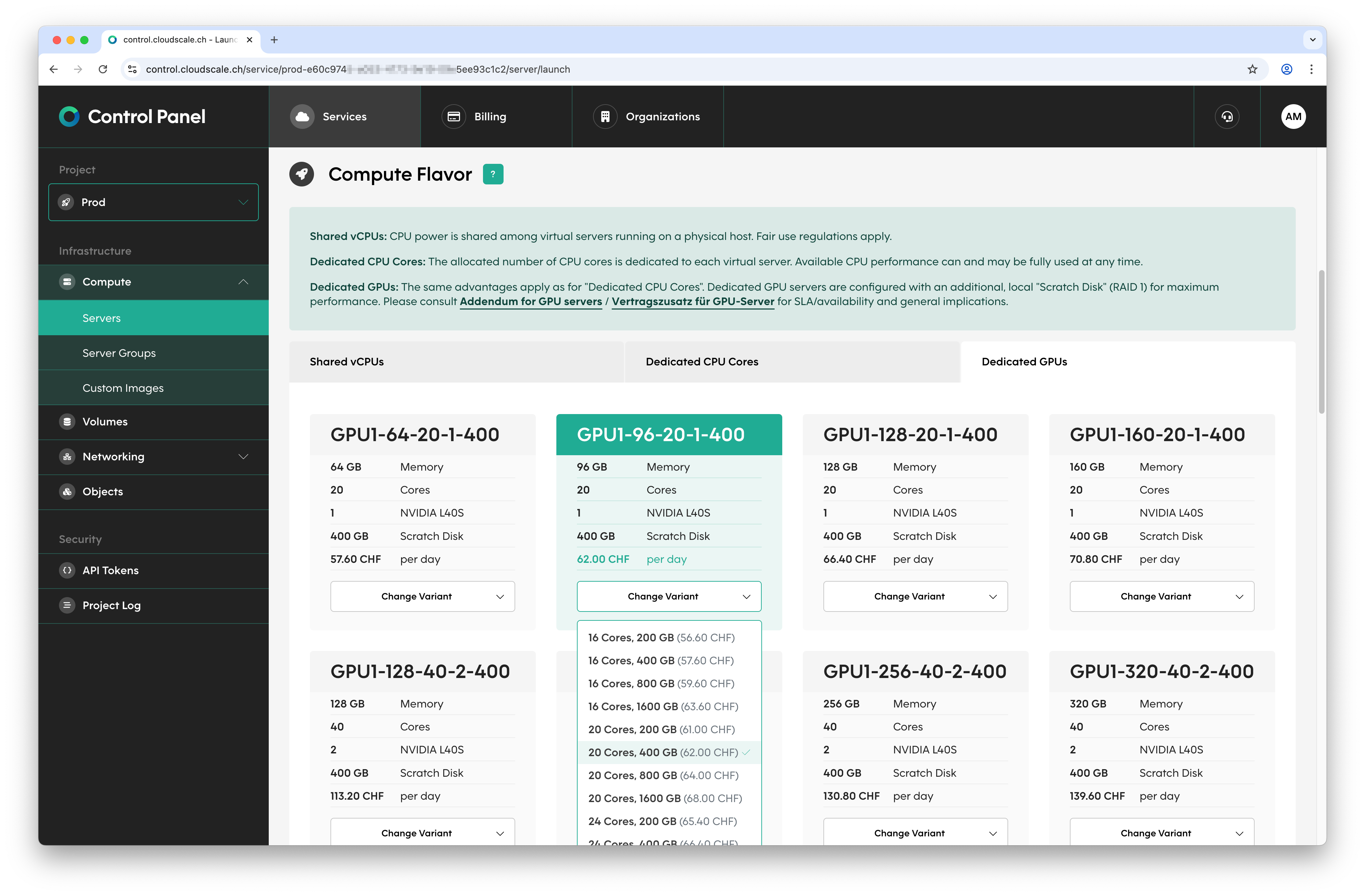

You can now also use virtual servers with GPUs at cloudscale. When launching a new server, simply choose one of our GPU flavors. Just like with the existing Flex and Plus flavors, you can choose between different CPU and RAM configurations. In addition, depending on the flavor, your server is assigned one to four physical GPUs. The GPU flavors also include a local scratch disk – more on that in a moment.

The new GPU flavors are designed for maximum performance. Accordingly, they are based on our proven Plus flavors: the selected number of CPU cores is dedicated exclusively to your virtual server, and you are free to fully utilize them 24/7. The same applies to the GPUs: one or more NVIDIA L40S GPUs provide concentrated computing power for your workloads – the GPUs are passed through directly to your virtual server as full PCI devices.

A new element: the scratch disk

From the very beginning, the virtual disks of your servers at cloudscale have been stored in our Ceph-based storage clusters. This means they are always immediately available, regardless of which physical machine your virtual server is currently running on, and these volumes (except for the root volume) can be moved between virtual servers. The trade-off is a certain amount of latency: read and write operations travel over network connections and therefore – despite 100 Gbps links – take noticeably longer than with locally installed NVMe disks.

In day-to-day use, most access operations are often concentrated on a small part of the dataset, which can be kept in a cache if necessary. Since LLMs and similar workloads can behave differently in this regard, our GPU servers come with a local so-called scratch disk. This storage sits on NVMe disks directly inside the physical machine on which the virtual server runs, thereby offering minimal latency. To protect against failures, the data is also stored redundantly in a RAID 1 setup.

Operationally, this setup brings a few special considerations. When moving GPU servers to another physical machine – which, because of the GPUs, is only possible while the server is powered off and not live – the contents of the scratch disk must also be transferred, which takes a certain amount of time. Moving your GPU server may, for example, be triggered when scaling or become necessary when maintenance work has to be carried out by us.

In the event of (hardware) issues, GPU servers are restarted on another physical machine depending on availability. However, you should assume that you will receive a new, empty scratch disk in such a case. Please therefore use the scratch disk only for data whose complete loss can be tolerated at any time, and regularly copy any intermediate results to another storage location.
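
As a rough illustration of that advice, here is a minimal Python sketch that copies new or changed files from the scratch disk to a persistent location. The function name `sync_results` and the mount points in the usage comment are assumptions made for this example, not cloudscale conventions; adjust them to wherever your scratch disk and your regular volume are mounted.

```python
import shutil
from pathlib import Path


def sync_results(scratch_dir: Path, persistent_dir: Path) -> list[Path]:
    """Copy files that are new or changed on the scratch disk to persistent storage.

    Returns the list of destination paths that were written.
    """
    copied = []
    for src in sorted(scratch_dir.rglob("*")):
        if not src.is_file():
            continue
        dst = persistent_dir / src.relative_to(scratch_dir)
        if dst.exists():
            s, d = src.stat(), dst.stat()
            # Skip files whose copy has the same size and is not older.
            if s.st_size == d.st_size and int(s.st_mtime) <= int(d.st_mtime):
                continue
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # copy2 also preserves the modification time
        copied.append(dst)
    return copied


# Example call (hypothetical mount points, adjust to your setup):
# sync_results(Path("/mnt/scratch/checkpoints"), Path("/mnt/volume/checkpoints"))
```

Running something like this from a timer or at the end of each training epoch keeps the amount of work lost with an empty scratch disk small. For large datasets, an established tool such as rsync may be the better choice.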

A look at the development

Our GPU servers have been available to selected customers since the end of February, and the feedback has been overwhelmingly positive. While gathering initial practical experience, we have also made various improvements – in some cases even to OpenStack, the open-source project on which our setup is based. Wherever possible and appropriate, we will of course contribute our enhancements back upstream to the respective projects.

These improvements include the ability to expand the scratch disk afterward – up to 1,600 GB is available locally, in addition to the usual volumes in our storage clusters. We have also disabled data compression when moving the scratch disk between physical machines; with our internal 100 Gbps network, it makes sense to avoid that overhead. And for the SSH connection opened for the transfer, we have ensured that the ciphers used benefit from the CPUs’ AES support.

Now it’s your turn

When creating a new virtual server in our Cloud Control Panel, you will find the GPU flavors under the Dedicated GPUs tab. To get started, use the "please contact support" link there once and send us the key details of your planned use case, attaching the signed Contract Addendum for GPU Servers. After a manual review, we will enable the GPU flavors for your desired project.

If you do not yet have a specific use case but would still like to chat with your own chatbot, Lukas makes it easy to get started. In our Engineering Blog, he shows you step by step how to install Ollama and DeepSeek-R1 70B at cloudscale and make them accessible via the web. One tip: our NVIDIA L40S GPUs have 48 GB of memory per GPU; to avoid a drop in performance, choose enough GPUs so that your selected model fits entirely into GPU memory.
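
To make the sizing tip concrete, the following Python sketch estimates how many GPUs a model needs. The 48 GB per GPU and the limit of four GPUs per server come from this article. The formula (parameter count times bits per parameter) and the 20% overhead for context and runtime buffers are simplifying assumptions for this example, so treat the result as a starting point rather than a guarantee.

```python
import math

GPU_MEMORY_GB = 48        # memory per NVIDIA L40S, as stated above
MAX_GPUS_PER_SERVER = 4   # GPU flavors provide one to four GPUs


def gpus_needed(params_billion: float, bits_per_param: int,
                overhead: float = 1.2) -> int:
    """Estimate the number of GPUs required to hold a model in GPU memory.

    overhead is an assumed safety factor for context and runtime buffers.
    """
    # 1e9 parameters * (bits / 8) bytes each, expressed in GB
    weights_gb = params_billion * bits_per_param / 8
    return math.ceil(weights_gb * overhead / GPU_MEMORY_GB)


def fits_on_one_server(params_billion: float, bits_per_param: int) -> bool:
    """Check whether the estimate stays within a single GPU server."""
    return gpus_needed(params_billion, bits_per_param) <= MAX_GPUS_PER_SERVER


# A 70B model under these assumptions:
print(gpus_needed(70, 4))    # 4-bit quantization
print(gpus_needed(70, 16))   # 16-bit weights
```

Under these assumptions, a 70B model quantized to 4 bits fits on a single GPU, while the same model with 16-bit weights would need all four.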

Our new GPU servers with current NVIDIA L40S GPUs and local scratch disk deliver maximum performance for your LLM and AI workloads. After a one-time enablement, you can start, scale, and delete GPU servers at any time in self-service via the Control Panel or API. And of course, as is typical with cloudscale, you benefit from per-second billing with no fixed costs and Swiss data residency. At the moment, however, availability is limited: first come, first served.